Quick Reference

| Location | What it shows |
|---|---|
| Dashboard → QA View | Most flaky tests in the selected period |
| Dashboard → Developer View | Flaky tests by author |
| Analytics → Summary | Flakiness trends over time |
| Test Runs → Summary | Flaky counts by category |
| Test Case → History | Stability percentage |
| Specs | Flaky rate per spec file |

How is flaky test detection activated?

Flaky test detection activates automatically when retries are enabled in Playwright. No additional configuration is required beyond the `retries` setting in `playwright.config.ts`.
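As a minimal sketch, retries can be enabled in the Playwright config like this. The retry count of `2` is an illustrative assumption, not a value TestDino requires.

```typescript
// playwright.config.ts
import { defineConfig } from '@playwright/test';

export default defineConfig({
  // Re-run a failed test up to 2 more times (illustrative value).
  // A test that fails first and then passes on a retry can be reported as flaky.
  retries: 2,
});
```

With `retries: 0`, a failing test is never re-run, so a fail-then-pass result cannot be observed within a single run.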

How does TestDino detect flaky tests?

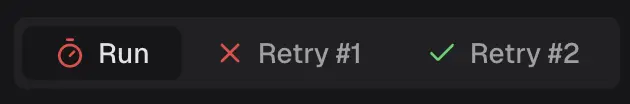

TestDino identifies flaky tests in two ways:

- Within a Single Run
- Across Multiple Runs

Within a single run, a test that fails initially but passes on retry is marked flaky. The retry count appears in the test details.

Both detection methods indicate that the test result depends on something other than your code.
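The single-run rule can be sketched roughly as follows. This is an illustration of the fail-then-pass idea only, not TestDino's actual implementation; the names `AttemptStatus`, `Outcome`, and `classifyAttempts` are hypothetical.

```typescript
// Hypothetical types: one status per attempt of a single test within one run.
type AttemptStatus = "passed" | "failed";
type Outcome = "passed" | "flaky" | "failed";

// Classify a test from its ordered attempts (first attempt, then retries).
function classifyAttempts(attempts: AttemptStatus[]): Outcome {
  if (attempts.length === 0) {
    throw new Error("at least one attempt is required");
  }
  const finalStatus = attempts[attempts.length - 1];
  // Every retry was used up and the last attempt still failed.
  if (finalStatus === "failed") return "failed";
  // The last attempt passed: flaky if any earlier attempt failed.
  return attempts.includes("failed") ? "flaky" : "passed";
}

// The retry count is the number of attempts after the first one.
function retryCount(attempts: AttemptStatus[]): number {
  return Math.max(0, attempts.length - 1);
}
```

For example, `classifyAttempts(["failed", "passed"])` yields `"flaky"` with a retry count of 1, while a test that passes on its first attempt stays `"passed"`.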

Where to find flaky tests?

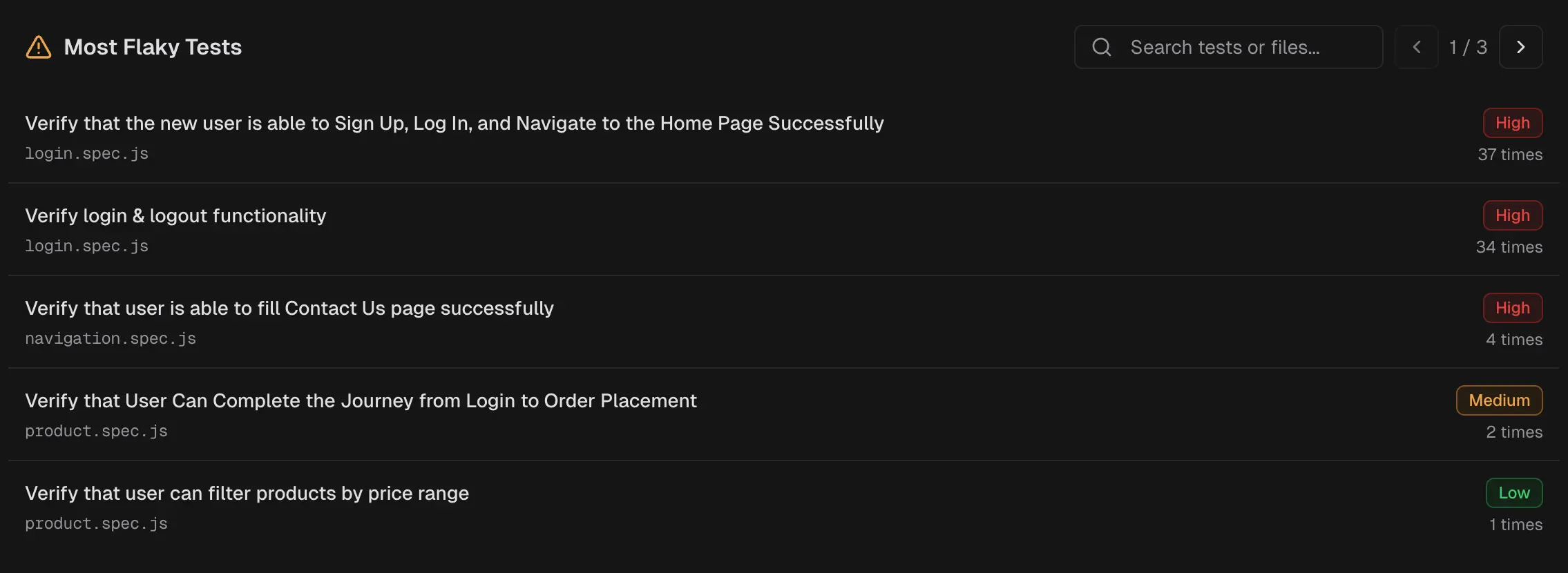

Dashboard → QA View → Most Flaky Tests

Lists tests with the highest flaky rates in the selected period.

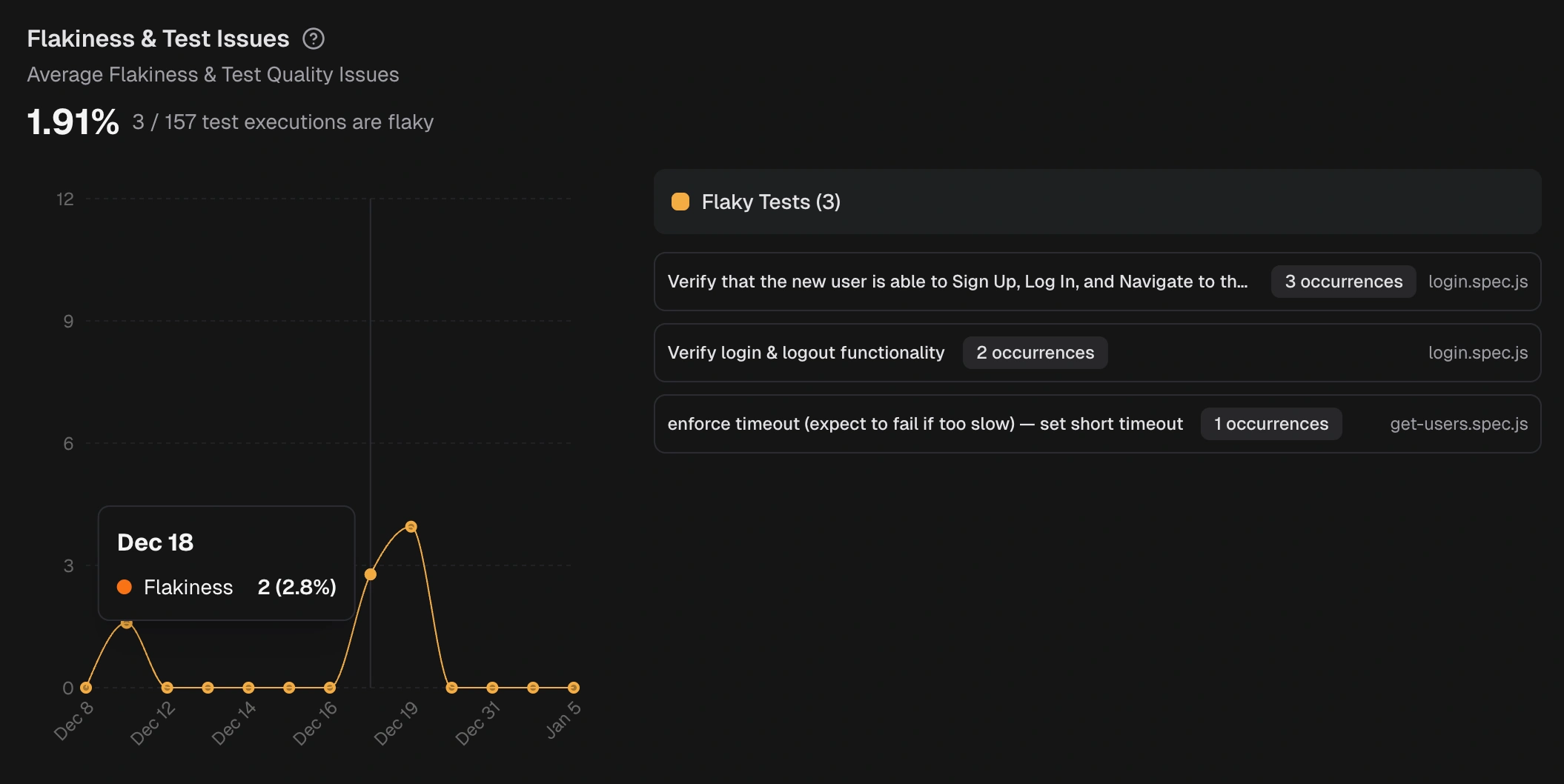


Analytics → Summary → Flakiness & Test Issues

Displays flakiness trends over time with a list of affected tests.

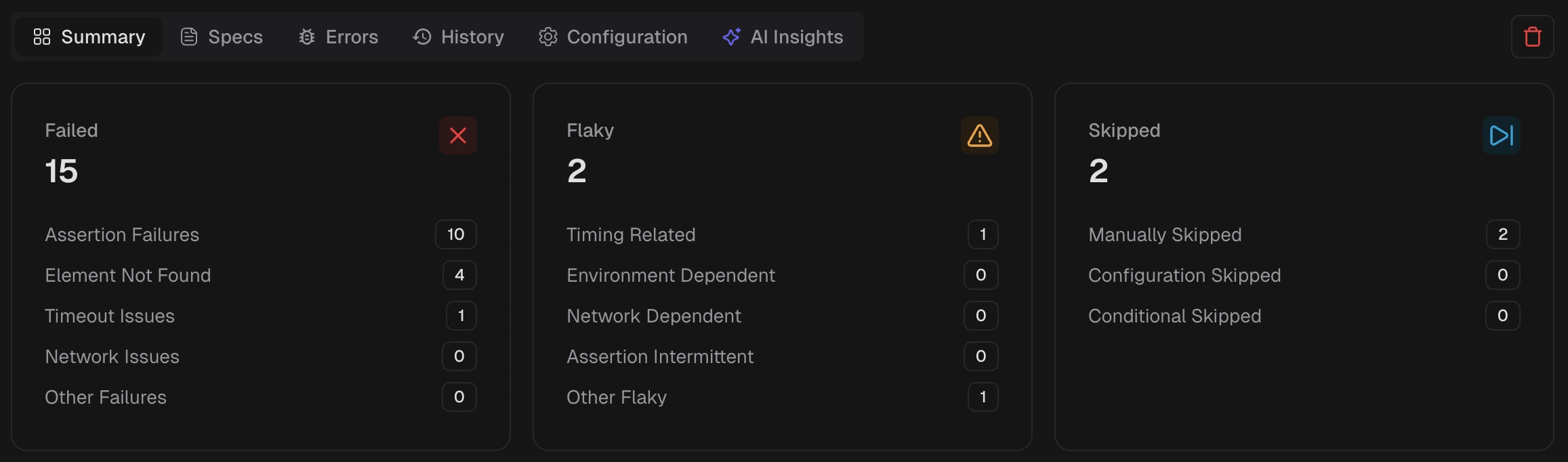

Test Runs → Summary

Each run shows flaky test counts with sub-categories: Timing Related, Environment Dependent, Network Dependent, Assertion Intermittent, and Other.


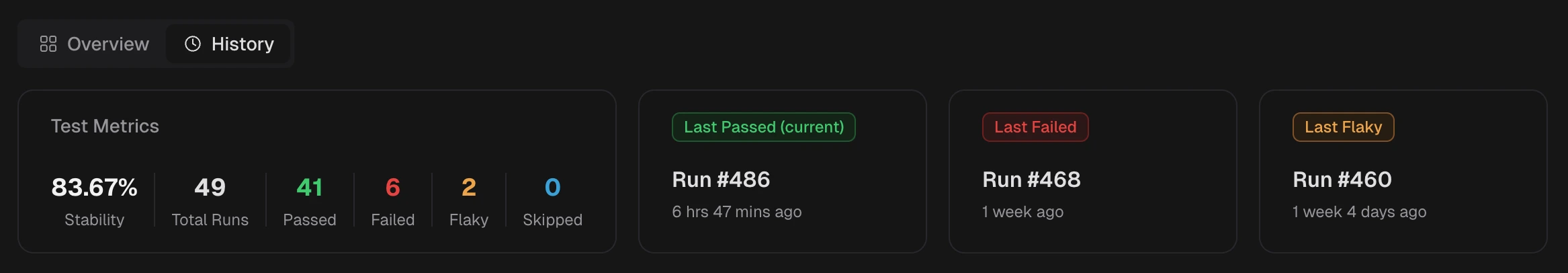

Test Case → History

Shows stability percentage and execution history for a single test.
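The source does not state how the stability percentage is calculated. As a rough sketch under that caveat, one plausible definition is the share of recorded executions that passed without needing a retry. The formula and the names `RunOutcome` and `stabilityPercent` are assumptions for illustration and may not match what TestDino displays.

```typescript
// Hypothetical outcome of one execution of a test in its history.
type RunOutcome = "passed" | "flaky" | "failed";

// Assumed definition: clean passes divided by total executions,
// expressed as a percentage rounded to one decimal place.
function stabilityPercent(history: RunOutcome[]): number {
  if (history.length === 0) return 0; // no history yet
  const cleanPasses = history.filter((o) => o === "passed").length;
  return Math.round((cleanPasses / history.length) * 1000) / 10;
}
```

Under this assumed definition, a history of two clean passes, one flaky run, and one failure gives 50.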


Flaky Test Categories

TestDino classifies flaky tests by root cause:

| Category | Description |
|---|---|
| Timing Related | Race conditions, order dependencies, and insufficient waits |
| Environment Dependent | Fails only in specific environments or runners |
| Network Dependent | Intermittent API or service failures |
| Assertion Intermittent | Non-deterministic data causes occasional mismatches |
| Other | Unstable for reasons outside the above |

Common causes of flaky tests:

- Fixed waits used instead of waiting for the page to be ready
- Missing `await`, so steps run out of order
- Weak selectors: the element changes, or the selector matches more than one element
- Tests share data and affect each other
- Parallel runs collide because the same user or record is used by multiple tests
- Slow or unstable network or third-party APIs
- CI setup differs from the local environment
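The shared-data and parallel-collision causes above are often addressed by giving each test its own data. A minimal sketch, assuming a hypothetical helper `uniqueEmail` and a hypothetical `runId` string supplied by the caller; in a Playwright test the worker number could come from `testInfo.workerIndex`.

```typescript
// Hypothetical helper: builds an email that is unique per run, worker, and test,
// so parallel tests never operate on the same account.
function uniqueEmail(runId: string, workerIndex: number, testTitle: string): string {
  // Reduce the test title to a short, address-safe slug.
  const slug = testTitle
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse anything non-alphanumeric into "-"
    .replace(/^-+|-+$/g, "")     // trim leading and trailing dashes
    .slice(0, 30);
  return `qa+${runId}-w${workerIndex}-${slug}@example.com`;
}
```

Two workers running the same test then get different addresses, for example `qa+r1-w0-login-works@example.com` and `qa+r1-w1-login-works@example.com`.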