What you’ll learn

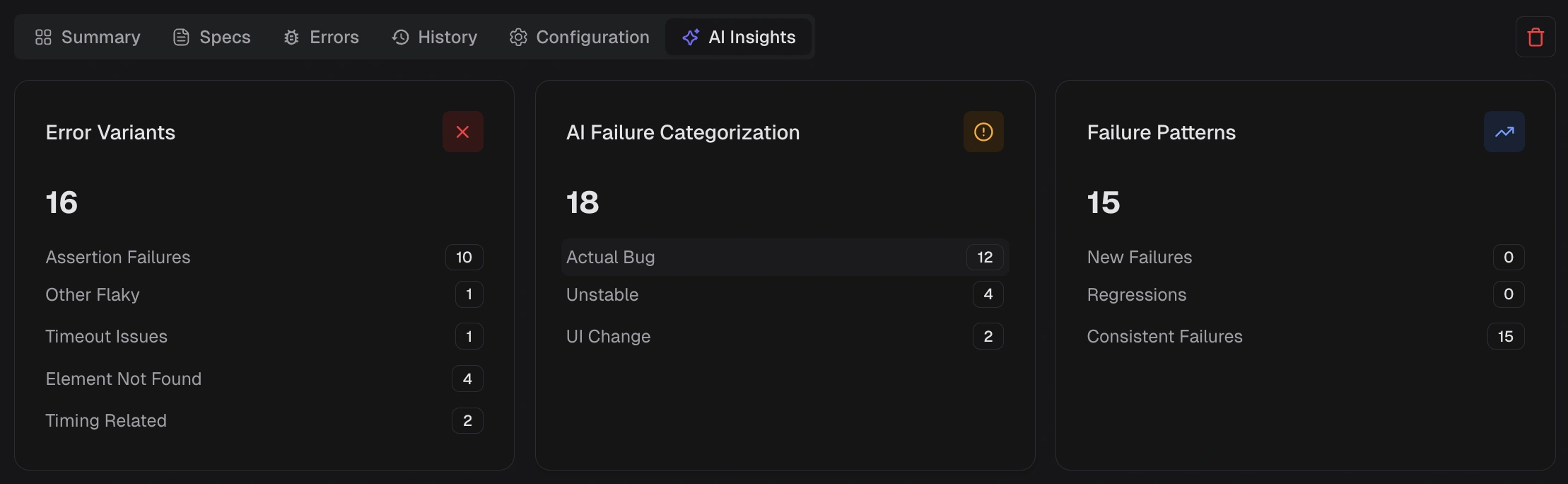

- How AI classifies test failures into categories (Bug, UI Change, Unstable, Misc)

- How failure patterns and error grouping work across runs

- How to connect AI assistants to your test data via MCP

Quick Reference

| Feature | Where | What it does |
|---|---|---|
| Failure Classification | Test runs, test cases, dashboard | Labels failures as Bug, UI Change, Unstable, or Misc |
| Failure Patterns | AI Insights, error grouping | Identifies persistent and emerging failures across runs |
| Test Case Analysis | Individual test cases | Provides root cause, recommendations, and quick fixes |
| Error Grouping | Test runs, analytics | Groups similar errors by message and stack trace |
| MCP Integration | AI assistants | Connects Claude, Cursor, and other AI tools to your test data |

Note: All AI features are enabled by default. Disable individual features or all AI analysis from Project Settings. Changes apply from the next test run.

Failure Classification

Every failed test receives an AI-assigned category with a confidence score.

| Category | Meaning |
|---|---|
| Actual Bug | Consistent failure indicating a product defect. Fix first. |
| UI Change | Selector or DOM change broke a test step. Update locators. |
| Unstable Test | Intermittent failure that passes on retry. Stabilize or quarantine. |
| Miscellaneous | Setup, data, or CI issue outside the above categories. |

Classifications appear in:

- Test run AI Insights tab

- Test case AI Insights panel
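The categories and confidence scores above lend themselves to a simple triage order. The following is a minimal sketch, not TestDino's API: the `ClassifiedFailure` record, the 0.0 to 1.0 confidence scale, the 0.7 threshold, and the ordering of categories after Actual Bug are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical record shape; field names are illustrative, not TestDino's API.
@dataclass
class ClassifiedFailure:
    test_name: str
    category: str      # "Actual Bug", "UI Change", "Unstable Test", or "Miscellaneous"
    confidence: float  # assumed to lie between 0.0 and 1.0

# "Actual Bug" first follows the table; the remaining order is an assumption.
PRIORITY = {"Actual Bug": 0, "UI Change": 1, "Unstable Test": 2, "Miscellaneous": 3}

def triage(failures, min_confidence=0.7):
    """Split failures into a prioritized queue and a manual-review list."""
    confident = [f for f in failures if f.confidence >= min_confidence]
    review = [f for f in failures if f.confidence < min_confidence]
    # Sort by category priority, then by descending confidence within a category.
    confident.sort(key=lambda f: (PRIORITY.get(f.category, len(PRIORITY)), -f.confidence))
    return confident, review

failures = [
    ClassifiedFailure("login button", "UI Change", 0.85),
    ClassifiedFailure("checkout total", "Actual Bug", 0.92),
    ClassifiedFailure("search debounce", "Unstable Test", 0.55),
]
queue, review = triage(failures)
print([f.test_name for f in queue])   # ['checkout total', 'login button']
print([f.test_name for f in review])  # ['search debounce']
```

Low-confidence labels go to a separate review list rather than being dropped, so a person can confirm the category before acting on it.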

Failure Patterns

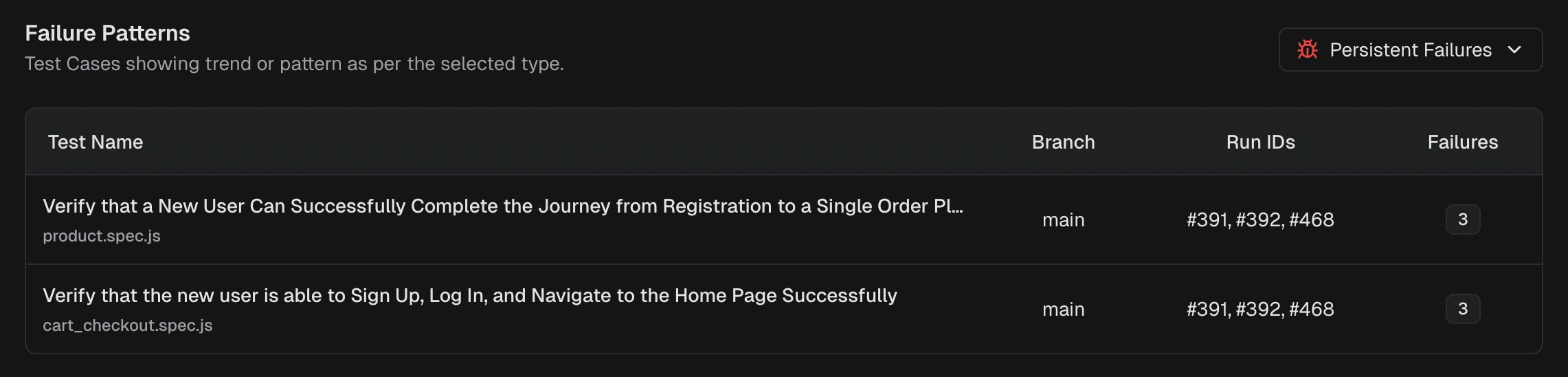

AI Insights identifies how failures behave across recent runs.

Persistent Failures

Tests failing across multiple runs in the selected window. These are high-impact, recurring problems.

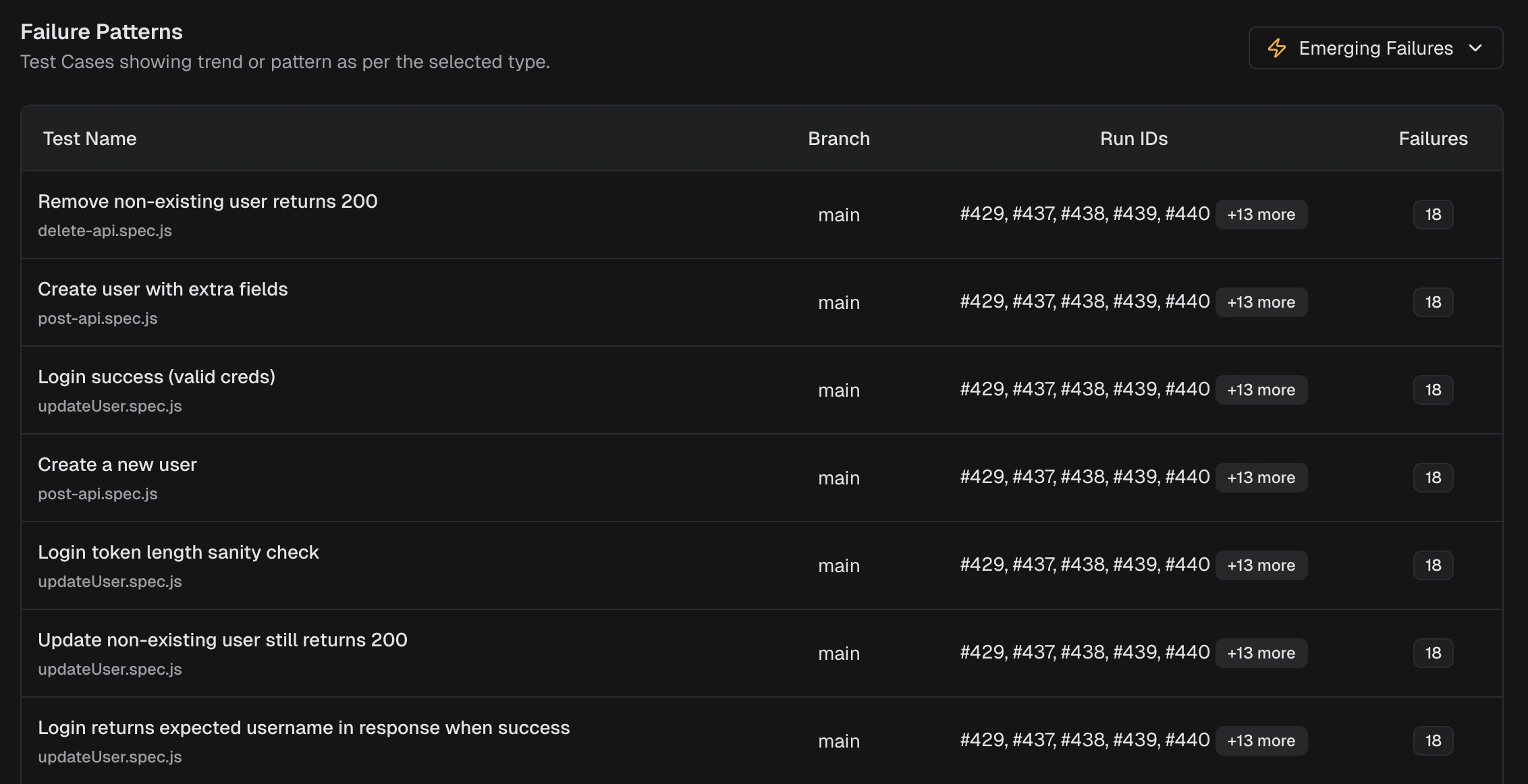

Emerging Failures

Tests that started failing recently and keep failing in subsequent runs. Use these to catch regressions early.

Pattern types also include:

- New Failures: tests that started failing within the selected window

- Regressions: tests that passed recently but now fail again

- Consistent Failures: tests failing across most or all recent runs
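To make the pattern types concrete, here is a minimal sketch that labels one test from its pass/fail history. The exact rules TestDino applies are not documented on this page, so the function name `pattern_labels`, the 80% threshold for "consistent", and the interpretation of each label are assumptions.

```python
def pattern_labels(history, consistent_ratio=0.8):
    """Label one test's failure pattern.

    history: outcomes in the selected window, oldest first; True means passed.
    The rules below are one interpretation of the pattern types, not TestDino's.
    """
    if not history or history[-1]:
        return []  # no data, or the latest run passed
    labels = []
    # Consistent: failed in most or all runs of the window.
    if history.count(False) / len(history) >= consistent_ratio:
        labels.append("Consistent Failure")
    if True in history:
        # Index of the most recent passing run.
        last_pass = len(history) - 1 - history[::-1].index(True)
        # A failure before that pass means the test recovered and broke again.
        failed_before = False in history[:last_pass]
        labels.append("Regression" if failed_before else "New Failure")
    return labels

print(pattern_labels([True, True, False, False]))  # ['New Failure']
print(pattern_labels([False, True, False]))        # ['Regression']
print(pattern_labels([False] * 5))                 # ['Consistent Failure']
```

A test can carry more than one label under these rules, for example a test that fails in four of five runs with a single pass in between is both consistent and a regression.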

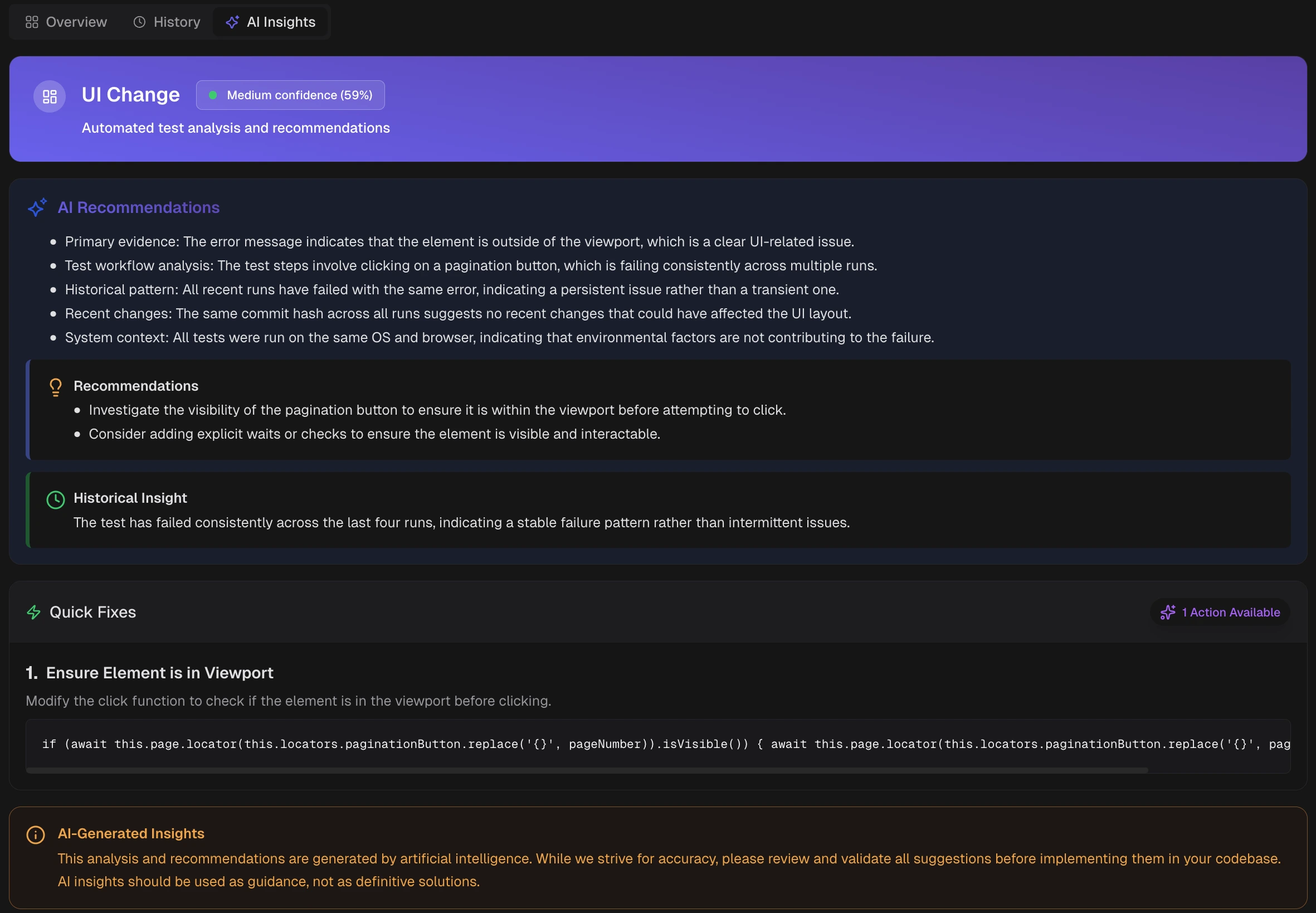

Test Case Analysis

For each failed or flaky test, AI provides a detailed breakdown.

| Section | What it provides |
|---|---|
| Category and Confidence | AI label with confidence score |
| Recommendations | Primary evidence and likely cause |
| Historical Insight | Behavior across recent runs (new or recurring) |
| Quick Fixes | Targeted changes to try first |

Error Grouping

AI groups similar errors by message text, stack trace patterns, and failure location. Error types include:

- Assertion Failures

- Timeout Issues

- Element Not Found

- Network Issues

- JavaScript Errors

- Browser Issues
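Grouping by message text and failure location can be approximated with a normalized key. This is a rough sketch of the general idea only; TestDino's grouping is AI-based and its internals are not described here. The tuple layout, the `normalize` rules, and the sample error strings are assumptions for illustration.

```python
import re
from collections import defaultdict

def normalize(message):
    """Replace volatile details so similar messages compare equal."""
    message = re.sub(r"'[^']*'", "<str>", message)  # single-quoted literals such as selectors
    message = re.sub(r'"[^"]*"', "<str>", message)  # double-quoted literals
    message = re.sub(r"\d+", "<n>", message)        # numbers such as timeouts and IDs
    return message.strip().lower()

def group_errors(errors):
    """errors: iterable of (test_name, message, top_stack_frame) tuples."""
    groups = defaultdict(list)
    for test_name, message, frame in errors:
        # Key on the normalized message plus the failure location.
        groups[(normalize(message), frame)].append(test_name)
    return dict(groups)

errors = [
    ("login", "Timeout 30000ms exceeded waiting for '#submit'", "login.spec.ts:14"),
    ("login retry", "Timeout 15000ms exceeded waiting for '#submit-btn'", "login.spec.ts:14"),
    ("cart", "expect(received).toBe(expected)", "cart.spec.ts:40"),
]
for key, tests in group_errors(errors).items():
    print(key, tests)
```

The two timeout errors collapse into one group because they differ only in the timeout value and the selector, while the assertion failure stays separate.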

MCP Integration

Connect Claude Code, Cursor, or Claude Desktop to your TestDino workspace through the MCP server. Assistants query real test data, investigate failures, and suggest fixes using the same AI classification and patterns described above. See TestDino MCP Overview for setup and Tools Reference for all 12 available tools.

Feed TestDino Docs to an AI Assistant

To give ChatGPT, Claude, Cursor, or any LLM full context on TestDino in one paste, use the llms.txt spec bundles. Both files are regenerated on every docs deploy.

| File | Purpose | URL |
|---|---|---|
| Index | Page-by-page index with descriptions | llms.txt |
| Full content | Full markdown of every docs page | llms-full.txt |
| Per-page markdown | Append .md to any docs URL for the raw markdown source, for example /mcp/overview.md | https://docs.testdino.com/<path>.md |
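The per-page rule in the table (append .md to a docs URL) can be sketched as a small helper. The function name `markdown_url` is hypothetical, and dropping the query string and fragment is an assumption about what a raw-markdown URL should look like. It builds the URL only and makes no network request.

```python
from urllib.parse import urlsplit, urlunsplit

def markdown_url(docs_url):
    """Return the raw-markdown URL for a docs page by appending .md to its path."""
    parts = urlsplit(docs_url)
    path = parts.path.rstrip("/")  # tolerate a trailing slash
    if not path.endswith(".md"):
        path += ".md"
    # Assumption: query and fragment refer to the rendered page, so drop them.
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))

print(markdown_url("https://docs.testdino.com/mcp/overview"))
# https://docs.testdino.com/mcp/overview.md
```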

Related

- AI Insights: Cross-run failure analysis and patterns

- Test Run AI: Per-run failure categorization and error analysis

- Test Case AI: Individual test recommendations and quick fixes

- TestDino MCP: Connect AI assistants to your test data